Automated Testing Makes for Less Risky WMS Rollouts

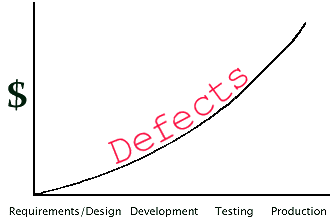

Defects cost time and money, whether the problem lies in your process or configuration or, more commonly, in a software failure or bug. As the graph above shows, defects only get more expensive in terms of downtime, labor, and reputational damage the longer they persist. A key “cost avoidance” metric, defect leakage percentage, measures how many defects “leaked” from testing into production, and everyone (except your merciless competition that hate-watches your operation’s every move with bloodlust in their eyes) wants this number to be as low as possible. Consider the ramifications of a software defect that prevents picking being found by a nightly test, versus that same issue being discovered in production after a go live.
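To make the metric concrete, here is a minimal sketch of one common way to compute defect leakage percentage: production defects divided by all known defects. Teams differ on the denominator (some divide by defects found in testing only), so treat the formula, the function name `defect_leakage_pct`, and the sample counts as illustrative assumptions rather than a standard.

```python
def defect_leakage_pct(found_in_testing: int, found_in_production: int) -> float:
    """Share of all known defects that escaped testing, as a percentage.

    Assumed formula: production defects / (testing + production defects) * 100.
    """
    if found_in_testing < 0 or found_in_production < 0:
        raise ValueError("defect counts cannot be negative")
    total = found_in_testing + found_in_production
    if total == 0:
        return 0.0  # no defects recorded anywhere, so nothing leaked
    return 100.0 * found_in_production / total


# Hypothetical release: 45 defects caught in testing, 5 surfaced after go live.
print(f"{defect_leakage_pct(45, 5):.1f}%")  # prints 10.0%
```

Tracked release over release, a falling number suggests that more defects are being caught before they reach the warehouse floor.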

Automated testing is, simply put, automating the execution and pass/fail reporting of repetitive tests against a system under test, with the end goal of catching potentially costly defects as early as possible. Manual testing is fine for rare edge cases, visual acceptance testing, and exploratory testing, but it can be laborious, pricey, and inconsistent if it is performed regularly to test an entire system. Automated testing is faster (more tests run in less time), more accurate, and cheaper, while allowing for much greater test coverage and reporting capabilities than manual testing. Whether for regression or performance testing, manual testing alone can’t be relied upon to test warehouse management systems (and in turn reduce business risk) in a timely manner, given the overall complexity of such a deep, enterprise-wide application.

Reasons for including automated testing in your WMS projects:


What types of tests are the most ripe for automation?

- Older, repetitive tests that have crystal-clear pass/fail criteria for verification and/or validation. A good example would be a test case that tries various username and password combinations for the WMS web interface.

- Data-driven tests and/or any test that requires lengthy, in-depth preparation. Examples include configuring and cleaning up large volumes of test data, or submitting lengthy forms with various combinations of input data. Let your test automation solution take the brunt of the repetitive configuration management workload.

- Sanity and smoke tests, especially any typical paths that your users take through the system under test. For example, you should make doubly sure that warehouse personnel can pick the top three best-selling products.

- Time-consuming tests that involve lengthy and repetitive user interactions. Not only is it expensive to tie up a human tester with long, monotonous tests like these, but over time the manual tester becomes glassy-eyed and bored from mindlessly clicking, and in turn the overall quality of testing drops.

- Performance tests, as the very nature of determining speed, reliability, scalability, and resource usage is what automated tests are perfectly designed to tackle. The process of finding system benchmarks demands the consistency and precision afforded by automated testing. After the big network upgrade, does the WMS crash when a large team of warehouse personnel is performing receiving, putaway, and picking operations at the same time?
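Two of the test types above, data-driven login checks and a simple concurrent-load check, can be sketched in a few lines. This is only an illustration: `FakeWms`, its `login` and `pick` methods, the credentials, and the SKU are hypothetical stand-ins, and a real suite would drive the actual WMS through its UI or API.

```python
import time
from concurrent.futures import ThreadPoolExecutor


class FakeWms:
    """Hypothetical stand-in for the system under test."""

    _users = {"picker01": "s3cret"}

    def login(self, username: str, password: str) -> bool:
        return self._users.get(username) == password

    def pick(self, sku: str) -> bool:
        time.sleep(0.01)  # simulate a little server-side work
        return True


# Data-driven cases: (username, password, expected login result).
LOGIN_CASES = [
    ("picker01", "s3cret", True),
    ("picker01", "wrong", False),
    ("", "s3cret", False),
    ("ghost", "s3cret", False),
]


def run_login_cases(wms: FakeWms) -> list:
    """Return the cases whose actual result differs from the expected one."""
    return [
        (user, pw, expected)
        for user, pw, expected in LOGIN_CASES
        if wms.login(user, pw) != expected
    ]


def run_concurrent_picks(wms: FakeWms, workers: int, picks_per_worker: int):
    """Fire picks from several simulated workers; return (successes, seconds)."""
    skus = ["SKU-1"] * (workers * picks_per_worker)
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(wms.pick, skus))
    return sum(results), time.perf_counter() - start


wms = FakeWms()
print("login failures:", run_login_cases(wms))
ok, elapsed = run_concurrent_picks(wms, workers=8, picks_per_worker=5)
print(f"{ok} picks succeeded in {elapsed:.2f}s")
```

Adding a new login permutation means adding one row to `LOGIN_CASES`, which is the main appeal of the data-driven style. The elapsed time is left unasserted here because a meaningful threshold depends on your own benchmarks.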

Remember, good test cases have…

- A clear objective with a refined scope

- Obvious and meaningful pass/fail verifications

- Clear and concise documentation

- Traceability to requirements

- Reusability

- Independence from other test cases while testing one thing

- Permutations taken into account by the test case designer
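As a rough sketch of how those qualities might show up in an automated suite, the structure below gives each case one objective, a requirement ID for traceability, and a single pass/fail check that runs independently of the others. The names `CaseSpec` and `run`, the IDs, and the placeholder checks are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CaseSpec:
    case_id: str
    objective: str              # one clear objective, narrowly scoped
    requirement_id: str         # traceability back to a requirement
    check: Callable[[], bool]   # unambiguous pass/fail verification


def run(cases):
    """Run each case on its own and report PASS/FAIL per case ID."""
    report = {}
    for case in cases:
        try:
            report[case.case_id] = "PASS" if case.check() else "FAIL"
        except Exception:
            report[case.case_id] = "FAIL"  # an error also counts as a failure
    return report


# Placeholder checks; real ones would exercise the WMS.
cases = [
    CaseSpec("TC-001", "Valid picker can log in", "REQ-AUTH-1", lambda: True),
    CaseSpec("TC-002", "Locked user is rejected", "REQ-AUTH-2", lambda: False),
]
print(run(cases))  # prints {'TC-001': 'PASS', 'TC-002': 'FAIL'}
```

Because no case depends on another's outcome, a failure in one does not mask or cascade into the rest of the report.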

Can your team get away with manually testing just the most critical WMS processes? The answer to that question: It’s risky but…possibly? Plenty of go lives and upgrades have been successful at the end of the day without adequate test coverage, though there was usually some unnecessary nail-biting and at least a few disruptive bumps in the road that could have been smoothed out in advance. Plenty of rollouts have also bombed due to major defects that reared their ugly heads in production and that could have been caught earlier with the increased test coverage afforded by automated testing. With profits, as well as jobs, on the line, we think automated testing is worth the extra initial “transitional effort” to drastically reduce business risk. Are you in the market for less risky WMS go lives or upgrades, and/or looking for a test automation solution? Drop us a line!

This post was written by:

James Prior, Sales Ops Manager. James has been working in software pre-sales and implementation since 2000, and more recently settled into working with a pre-sales team and occasionally writing blog posts. Drop him a line at: james.prior[at]tryonsolutions[dot]com.
